Quantum Computing and Artificial Intelligence (AI)

One of the most mysterious and promising trends in the world of technology is quantum computing. For a couple of years now, quantum computing has generated a lot of buzz and hype in the press, but it is still fairly unclear to most people what it is and what it isn’t. In this blog post I would like to share my perspective on quantum computing, and especially on the potential impact it can have on Artificial Intelligence (AI). I’ll start with the basics by explaining the foundations of quantum computing. In the second part I will focus on what quantum computing can mean for AI by exploring several use cases. I’ll conclude with some links to resources where you can learn more about this exciting new technology.

What is quantum computing?

Quantum computing is a computing architecture based on the principles of quantum mechanics (QM), which were developed at the beginning of the 20th century by people like Max Planck, Niels Bohr and Albert Einstein. QM is a fundamental theory in physics that describes nature at the smallest scales: atoms, electrons and even smaller particles. At these scales QM has replaced the classical world view based on Newton’s laws of physics, and it provides a ‘quantum lens’ through which to describe the physical world. This opens up new and radical ways to explore and exploit physical processes, some of which are used in quantum computing. QM describes the world in terms of waves, fields and quantum states, and particles can behave very counterintuitively. Richard Feynman, a Nobel Prize-winning theoretical physicist, once said that nobody understands quantum mechanics. What we do know is that quantum mechanics does describe how nature works, so, to quote Feynman again, wouldn’t it be nice to use nature in order to simulate nature? In other words, quantum computing is especially geared to tackle some of nature’s toughest problems.

“Wouldn’t it be nice to use nature in order to simulate nature”

Richard Feynman, Nobel Prize-winning theoretical physicist

Qubits

The traditional computing paradigm uses bits to store and process information. A bit can be in one of two states: the value 0 or the value 1. In quantum computing, information is stored and processed using quantum mechanical building blocks called quantum bits, or qubits. In machines like IBM’s, qubits are built from superconducting circuits, which can only reach a quantum state under very special circumstances, such as an extremely low temperature: these qubits are operated at about 10 millikelvin (approx. -273.14°C / -459.65°F). Qubits are very prone to interference from the outside world, so they need an extremely stable, isolated environment. That is why quantum computers look like a large vacuum tube, as you can see in the picture below.

An IBM quantum computer inside the Thomas J. Watson Research Center in Yorktown Heights, New York. Source: IBM
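The temperature figures above can be checked with the standard conversion formulas (°C = K − 273.15 and °F = K × 9/5 − 459.67). A minimal Python sketch; the helper names are illustrative only:

```python
def kelvin_to_celsius(kelvin: float) -> float:
    # Celsius is Kelvin shifted by 273.15 degrees.
    return kelvin - 273.15

def kelvin_to_fahrenheit(kelvin: float) -> float:
    # Fahrenheit uses 9/5-sized degrees with absolute zero at -459.67 °F.
    return kelvin * 9 / 5 - 459.67

t = 10e-3  # 10 millikelvin, expressed in kelvin
print(f"{kelvin_to_celsius(t):.2f} °C")     # -273.14 °C
print(f"{kelvin_to_fahrenheit(t):.2f} °F")  # -459.65 °F
```

In other words, 10 mK is only one hundredth of a degree above absolute zero.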

Superposition and entanglement

When qubits are in a quantum state, they can exhibit quantum properties such as (quantum) superposition and (quantum) entanglement. These two concepts make quantum computing different from classical computing. Superposition refers to a combination of qubit states: when a qubit is in superposition, it can have both the value 0 AND the value 1 at the same time, and only when it is measured does it settle on one of them. An analogy for superposition is a spinning coin: while it spins, the coin is both heads and tails, and only when it lands does it show one side. Entanglement is another counterintuitive quantum phenomenon in which qubits are connected (entangled) with each other and act as one system, so that their measurement outcomes are correlated. The basic premise of quantum computing is that the size of the state a quantum computer works with doubles with every qubit that is added: n qubits are described by 2^n numbers (amplitudes), so it grows exponentially. However, scaling quantum systems also requires lowering the error rate, so that these systems become fault tolerant. Increasing the number of qubits and lowering the error rate are the main challenges in current quantum computing research. Big tech companies like IBM, Microsoft, Google and Amazon are all making huge investments in this area.
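To make superposition, entanglement and the doubling argument more concrete, here is a minimal state-vector sketch in pure Python. It is a classical simulation for illustration only, not how quantum hardware works and not the API of any quantum SDK; the function names are hypothetical. An n-qubit register is stored as a list of 2^n amplitudes, a Hadamard gate puts one qubit into an equal superposition, and a Hadamard followed by a CNOT entangles two qubits into a so-called Bell state whose only possible outcomes are 00 and 11.

```python
import math

# An n-qubit register is a list of 2**n amplitudes (real numbers suffice for H and CNOT).
# Qubit 0 is the most significant bit of the basis-state index.

def zero_state(n):
    """Return |00...0> for n qubits."""
    state = [0.0] * (2 ** n)
    state[0] = 1.0
    return state

def apply_hadamard(state, target, n):
    """Hadamard on `target`: |0> -> (|0>+|1>)/sqrt(2), |1> -> (|0>-|1>)/sqrt(2)."""
    s = 1 / math.sqrt(2)
    bit = 1 << (n - 1 - target)
    new = state[:]
    for i in range(len(state)):
        if i & bit == 0:          # visit each (target=0, target=1) pair once
            j = i | bit
            a, b = state[i], state[j]
            new[i] = s * (a + b)
            new[j] = s * (a - b)
    return new

def apply_cnot(state, control, target, n):
    """Flip `target` in every basis state where `control` is 1."""
    cbit = 1 << (n - 1 - control)
    tbit = 1 << (n - 1 - target)
    new = state[:]
    for i in range(len(state)):
        if i & cbit:
            new[i ^ tbit] = state[i]
    return new

def probabilities(state, n):
    """Born rule: probability of each nonzero measurement outcome, keyed by bit string."""
    return {format(i, f"0{n}b"): round(a * a, 6)
            for i, a in enumerate(state) if abs(a) > 1e-9}

# Superposition: a single qubit after a Hadamard gate.
print(probabilities(apply_hadamard(zero_state(1), 0, 1), 1))  # {'0': 0.5, '1': 0.5}

# Entanglement: Hadamard on qubit 0, then CNOT from qubit 0 to qubit 1.
bell = apply_cnot(apply_hadamard(zero_state(2), 0, 2), 0, 1, 2)
print(probabilities(bell, 2))  # {'00': 0.5, '11': 0.5}

# Every extra qubit doubles the number of amplitudes to keep track of.
for n in (1, 2, 10, 50):
    print(n, "qubits ->", 2 ** n, "amplitudes")
```

The last loop also shows why simulating quantum computers on classical hardware breaks down quickly: the list of amplitudes doubles in length with each qubit.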

So what?

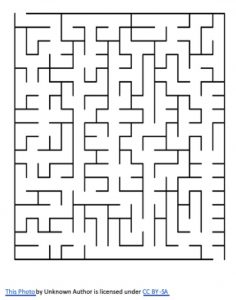

What is the big deal about quantum computing, and what are its potential use cases, you may ask? Most experts agree that the potential of quantum computing is huge, despite the many (technical) challenges researchers are currently facing. To me, the added value lies in the potentially exponential computing power that quantum computing offers for problems that are very hard for classical computers to solve. Many use cases relate to tough problems in nature, such as climate change research and drug discovery, and require huge amounts of data to be processed. The edge quantum computing has over classical computing is often illustrated with a maze. A classical computer has to try potential paths through the maze one at a time until it has found the way out. A quantum computer, on the other hand, can explore many potential paths in superposition, which can make it much faster. This metaphor is a simplification: a measurement returns only one outcome, so quantum algorithms rely on interference to make the right answers more likely, and the achievable speedup differs greatly from problem to problem. Especially in optimization use cases, such as the travelling salesman problem (see below), the hope is that computing time can be reduced dramatically.

A maze
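A well-studied example of an interference-based speedup is Grover’s search algorithm, which I chose for illustration: it finds one marked item among N candidates in roughly (π/4)√N steps, whereas a classical search needs about N/2 checks on average. That is a quadratic speedup, not an exponential one. The minimal sketch below simulates Grover’s algorithm classically in pure Python for a single marked item; the function names are hypothetical, and a classical simulation like this is of course not faster than a classical search.

```python
import math

def grover_success_probability(n_items: int, marked: int, iterations: int) -> float:
    """Simulate Grover's search with one marked item and return the
    probability of measuring that item after the given number of iterations."""
    # Start in a uniform superposition over all items.
    amp = [1 / math.sqrt(n_items)] * n_items
    for _ in range(iterations):
        # Oracle: flip the sign of the marked item's amplitude.
        amp[marked] = -amp[marked]
        # Diffusion: reflect every amplitude about the mean amplitude,
        # which amplifies the marked item through interference.
        mean = sum(amp) / n_items
        amp = [2 * mean - a for a in amp]
    return amp[marked] ** 2

def optimal_iterations(n_items: int) -> int:
    # About (pi/4) * sqrt(N) iterations maximise the success probability.
    return math.floor(math.pi / 4 * math.sqrt(n_items))

for n in (8, 64, 1024):
    k = optimal_iterations(n)
    p = grover_success_probability(n, marked=3, iterations=k)
    print(f"N={n:5d}  classical ~{n / 2:6.0f} checks  "
          f"Grover {k:3d} iterations  P(success)={p:.3f}")
```

For 1,024 items the classical search needs around 512 checks on average, while Grover’s algorithm needs 25 iterations to find the marked item with high probability.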

In virtually any industry, from finance to aviation, potential use cases for quantum computing can be identified. Despite major breakthroughs in recent years, many experts say that quantum computing is still at a very early stage of maturity, and some even doubt that practical quantum computers will ever be built. This section has only scratched the surface of what quantum computing is. If you want to learn more, check out the Resources section at the end of this blog. Now let’s delve into how quantum computing might add value to AI.

Quantum and AI

Gartner identifies quantum computing[i] as one of the five emerging technology trends for 2019 and as one of the areas that could revolutionize the entire IT industry. According to Gartner, quantum computing holds great promise in the areas of machine learning (ML) and AI. Today, data scientists cannot address some key opportunities because they run into the computing limitations of classical computing architectures. “Some of these problems may take today’s fastest supercomputers months, or even years, to run through a series of permutations, making it impractical to attempt,” says Matthew Brisse, VP Analyst at Gartner. For AI, quantum computing could enable faster structured predictions in unsupervised learning, semi-supervised learning and deep learning, since it would be able to process much more data at a much faster pace. Use cases around optimization in particular could benefit from this.

Optimization

Optimization problems can become very computationally intensive, since the number of options can grow exponentially. A good example of what optimization entails is the travelling salesman problem, which is often considered the benchmark for optimization problems. The travelling salesman problem tries to answer the following question: “Given a list of cities and the distances between each pair of cities, what is the shortest possible route that visits each city and returns to the origin city?” This is an easy problem with, e.g., 4 cities, but the number of possible routes grows factorially (even faster than exponentially) with each city added. For 10 cities there are already 181,440 different routes to tour all the cities, and for 20 cities there are about 6.1 × 10^16. In 2001, a team including mathematicians from Princeton University calculated the exact solution for 15,112 cities in Germany, which took the equivalent of 22.6 years of computing time on a single processor. The exact solution for an instance with roughly 86,000 cities required about 136 CPU-years. A quantum system could, in theory, reduce this computing time dramatically. However, the reality is that, to date, no quantum computer has solved the travelling salesman problem at anything close to this scale, for various technical reasons.

Example of a travelling salesman problem for cities in Germany. Source: Technical University Munich
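The route counts above follow from the formula (n − 1)!/2 for the symmetric case: fix the starting city, order the remaining n − 1 cities in any way, and divide by two because a route and its reverse have the same length. The minimal Python sketch below reproduces the 181,440 and 6.1 × 10^16 figures and solves a tiny instance by brute force, which is exactly the approach that becomes hopeless as the number of cities grows. The function names and the four-city example are illustrative; record-setting exact solvers rely on far more sophisticated methods such as branch-and-cut.

```python
import itertools
import math

def tour_count(n_cities: int) -> int:
    """Distinct tours in a symmetric TSP: fix the start city, permute the
    other n - 1 cities, and halve because a tour equals its reverse."""
    return math.factorial(n_cities - 1) // 2

def tour_length(tour, coords):
    # Sum of Euclidean distances along the tour, including the return to the start.
    return sum(math.dist(coords[tour[i]], coords[tour[(i + 1) % len(tour)]])
               for i in range(len(tour)))

def brute_force_tsp(coords):
    """Exact solution by checking every ordering; only feasible for a handful of cities."""
    start, *rest = range(len(coords))
    best = None
    for perm in itertools.permutations(rest):
        tour = (start, *perm)
        length = tour_length(tour, coords)
        if best is None or length < best[1]:
            best = (tour, length)
    return best

print(tour_count(10))  # 181440
print(tour_count(20))  # 60822550204416000, about 6.1 * 10**16

# Four cities on the corners of a unit square: the shortest tour walks the perimeter.
square = [(0, 0), (0, 1), (1, 1), (1, 0)]
print(brute_force_tsp(square))  # ((0, 1, 2, 3), 4.0)
```

Because the brute-force loop visits (n − 1)! orderings, adding a single city multiplies the work by roughly the number of cities already present.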

Conclusion

There is huge potential for quantum computing, especially in the world of AI and ML. According to Bernard Marr, an independent AI expert and author, “The promise is that quantum computers will allow for quick analysis and integration of our enormous data sets which will improve and transform our machine learning and artificial intelligence capabilities.” However, the reality is that we are still in the very early days of quantum computing. Experts compare the current state of quantum computing to the time when the vacuum tube was introduced, long before the transistor and the integrated circuit were invented. So the best is yet to come, and many benefits and use cases have yet to be discovered. But I would argue that every AI/ML practitioner and every CIO should know the basics of quantum computing and should start exploring what this technology can add to their organization and industry. Gartner expects that by 2023, 20% of organizations will be budgeting for quantum computing projects. However, Gartner recommends that CIOs focus on business value and expect results to be at least five years out.

Resources

There’s a ton of material on quantum computing to be found on the internet. In this section I will share some resources that I personally used to learn about and explore quantum computing. If you have any thoughts, comments or tips for resources, don’t hesitate to leave them in the comments.

- Quantum Computing Expert Explains One Concept in 5 Levels of Difficulty. One of the best videos on quantum computing I have seen.

- IBM Q. IBM’s website on quantum computing has lots of learning materials and even allows developers to code on a quantum computer.

- Quantum Computing Fundamentals. An excellent online course from MIT in which you can learn the fundamentals of quantum computing.

- Lex Fridman hosts excellent podcasts on the topic of AI. In two episodes he interviewed Scott Aaronson and Sean Carroll about quantum mechanics and quantum computing.

[i] Quantum computing is not mentioned as a separate category but as part of the Postclassical Compute and Comms trend.